Artificial intelligence (AI) has simplified many areas of our lives, particularly the learning process. ChatGPT and other AI tools can answer almost any question and write essays on any topic you provide. Many students see AI as a way to avoid writing their texts on their own. However, the arrival of AI tools has raised another question: how to avoid getting caught using ChatGPT or similar applications and prevent problems at colleges and universities.

The popularity of these tools has led to the emergence of AI detection platforms such as GPTZero, Winston AI, Copyleaks, Sapling AI Detector, and others. What do you think: can you get caught using ChatGPT? Let’s analyze why there is a high chance of facing severe issues when using AI and how to make your essay not AI detectable.

For students seeking assistance with complex assignments, such as business plan writing services, it’s crucial to ensure the final product reflects their own understanding and effort.

Why It’s Important to Know How to Avoid AI Detection in Writing

Most students are probably afraid of plagiarism being detected in their papers because it may adversely affect their score and their overall chances of graduating successfully. As a writer, I always recommend that students use plagiarism detection systems to check their writing for originality before submitting their work. This simple rule allows us to avoid violating academic integrity, show respect for other authors’ contributions to the field we explore (for example, when working with complex research topics in data mining), and deliver original papers that tend to earn the highest score. Using plagiarism detection tools helps us make sure that we won’t face problems after submitting our writing.

In my experience, universities treat AI use much as they treat plagiarism: teachers expect students to develop their writing skills and learn to express their own opinions, not those produced by other people or systems. Human thoughts and ideas are highly valued today because their originality and creativity cannot be replaced by any AI tool. Schools are also increasingly defining policies on the use of technology, reflecting concerns not only about AI but also about phone use in learning environments. Using such applications can make your teacher believe that you lack interest in your field of study or the skills to write a paper that expresses your own opinion. I always emphasize the necessity of checking the work with AI detection, as a writer must always be able to prove their paper’s originality.

If you aim to understand how to avoid AI detection in writing, running your paper through plagiarism and AI detection systems before submitting it can help you improve the quality of your text and demonstrate the originality of your writing.

However, let’s recognize that AI tools make our lives easier and significantly simplify the writing process. This explains why many students want to understand how to get around an AI detector. I don’t recommend using ChatGPT or other AI applications when working on your paper; still, if you decide to generate content with such a tool, always use AI detection tools to check your text and avoid problems that may negatively affect your learning experience.

AI generated content can be a source of inspiration or an example of what may be written. Use it to understand the topic better or get an idea of what you can include in your paper, but avoid simply copying and pasting this text into your file. Knowing how to make something not AI detectable can make your life easier and considerably speed up the writing process. Therefore, always check your content using plagiarism and AI detection systems so that you submit human written, plagiarism free papers and receive the highest score for your efforts.

Similarly, utilizing a professional business report writer can provide valuable guidance, but the content should be personalized to maintain academic integrity.

Practical Tips on How to NOT Get Caught Using ChatGPT

If you really enjoy using ChatGPT or any other similar tool, let’s focus on how to avoid AI detection in writing:

- Use AI detection platforms such as Copyleaks or AI Detector by CustomWritings.com. The more detection tools you use, the more reliable your result will be: each tool may highlight different phrases or sentences, so it’s essential to paraphrase all the parts that could be marked as AI generated content. Remember that using AI tools may save writing time, but you’ll need much more time to review and edit the text to show that it’s human written and receive 0% AI detection when using online tools.

- Utilize an AI rewriter to avoid AI detection. The rapid progress of AI really impresses me: people have created not only tools to generate content but also applications that paraphrase AI generated text to make it sound human written. For example, try Quillbot: this application focuses on strengthening your writing, making your language more professional, correcting your structure, removing repetitive words, and improving your punctuation and spelling.

- Take advantage of Quillbot. This tool helps me spend less time editing and proofreading the text since it shows most of my mistakes and provides a valuable tip for each part or sentence that can be improved. Moreover, the primary advantage of Quillbot is its ability to enhance your content to make it sound like human written text, even if you have used an AI tool to generate specific parts. You may wonder, “Can you get caught using ChatGPT?” My answer would be, “If you use applications such as Quillbot, you’ll be less likely to face AI detection when checking your paper.”

- Use formulaic language. If you want to know how to avoid getting caught using ChatGPT, my writing experience shows that formulaic language can help keep your content from being flagged as AI generated. Formulaic language consists of fixed expressions typical of conversational speech. Such phrases are widely used in human language and, in my experience, rarely appear in the content an AI tool tends to generate. For example, formulaic expressions include:

- I’ll get back to you later.

- Can I come in?

- Are you okay?

However, remember that such expressions cannot be used in formal essays due to academic requirements. If you use ChatGPT to generate a text for your email, including formulaic language in your content will be an excellent idea.

Some SEO specialists also use ChatGPT to create content for their websites. If you aim to make your website valuable and useful for your users, consider that the primary goal of SEO is to meet consumers’ needs and help them find the information they need.

AI generated content is frequently weaker and less interesting to read than human written text. Therefore, even if you use an AI tool for SEO purposes, check whether each sentence is understandable to readers.

The most important tip I’d give SEO specialists is that the content they share with their users must be engaging and meet users’ interests and expectations.

Examples of How to Get Around AI Detector

You’ll very likely face AI detection if you copy and paste the content generated by ChatGPT directly into your paper and skip a review before submitting. Nevertheless, changing the structure of your text, replacing repetitive words with synonyms, analyzing each sentence thoroughly, and including formulaic language will make you less likely to get caught by AI detection when using ChatGPT. Let’s concentrate on some examples of how to use ChatGPT and not get caught.

I asked ChatGPT to create content on the topic, “Why is education important?” Here is what I received:

| “Education is crucial for students as it equips them with the knowledge and skills necessary to navigate life’s challenges and opportunities. It fosters intellectual growth, critical thinking, and problem-solving abilities, which are invaluable in both personal and professional spheres.” |

AI detection systems, such as GPTZero, highlight this part as AI generated. You may ask, “How do I get past an AI detector?” Let’s try to change the structure of each sentence and replace some words with synonyms where possible. For example, the writer can use the following version in the paper to reduce the chance of AI detection:

| “Education significantly influences people’s lives because it helps them gain critical knowledge and skills to overcome multiple obstacles effectively. Moreover, education positively impacts individuals’ self-development, allowing them to develop their critical thinking, coping skills, and intellectual abilities, while this progress can play a vital role in humans’ personal and professional spheres.” |

This part is less likely to trigger AI detection in online tools. Even if you avoid using ChatGPT or similar tools when writing your content, it’s better to run your text through AI detection, as a writer should always make sure that the paper doesn’t include parts that could be flagged as AI generated. Let’s consider some crucial points on how to avoid getting caught using ChatGPT.

Can you get caught using ChatGPT if you have changed the structure of the content and replaced repetitive words?

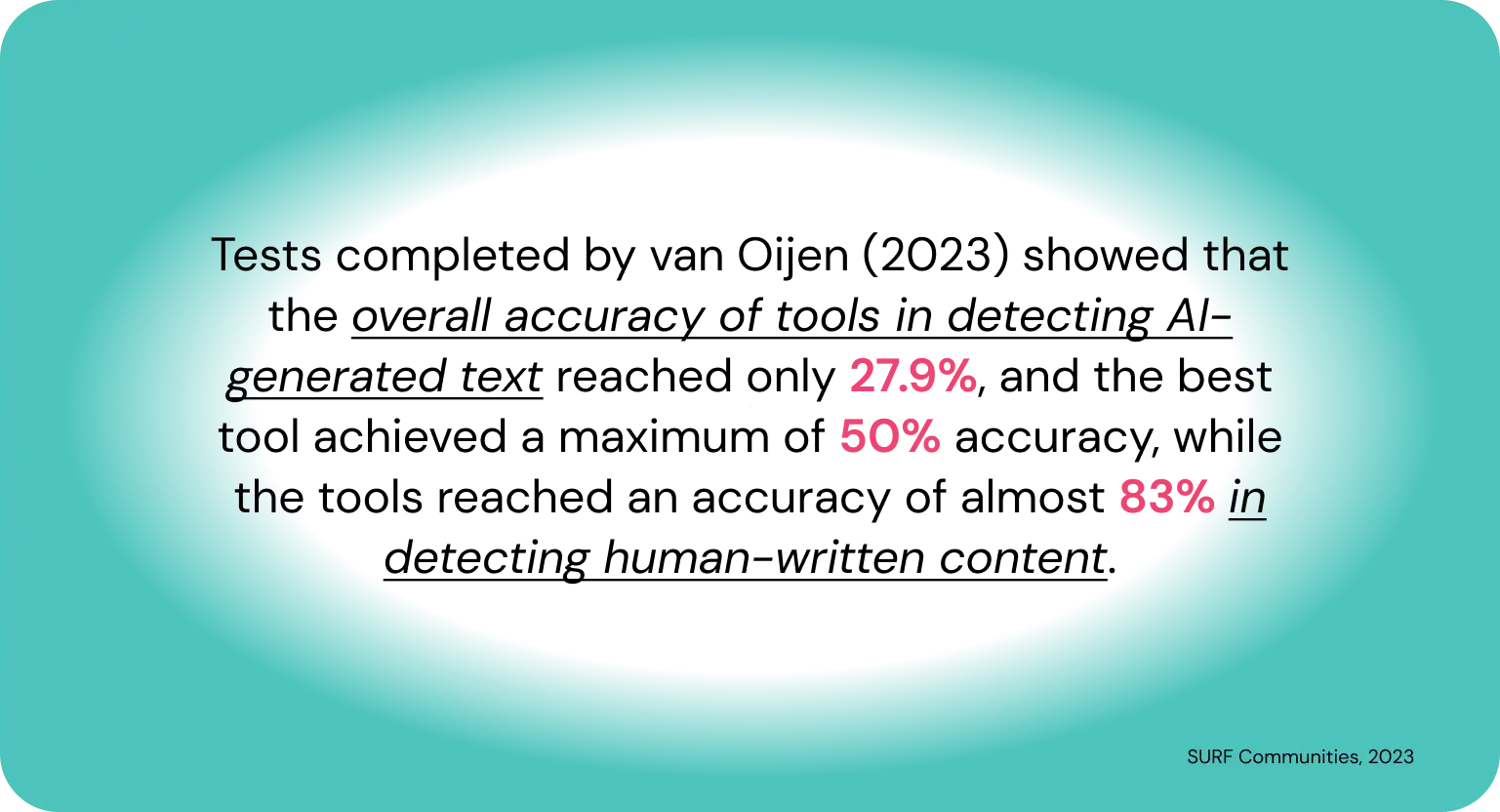

Yes, even changes in word choice and structure cannot guarantee that your text won’t be highlighted as AI generated content. Remember also that AI detection systems are not perfect, which explains why they frequently mark parts written by humans as AI generated. Even if you avoid ChatGPT and other tools entirely, some parts of your content may still be flagged as generated by AI. That’s why it’s essential to check each paper before submitting it to avoid unnecessary issues.

How to Avoid Detection:

- Don’t rely on ChatGPT. Use ChatGPT as a brainstorming tool or to get a draft going, but always add your own thoughts, opinions and examples. This will make it less predictable for detectors.

- Run it through AI detectors. Before you submit, run it through tools to see what parts of the text will be flagged as AI. Then you can make the necessary adjustments.

- Paraphrase and edit. After using ChatGPT, thoroughly paraphrase it, change sentence structure, add synonyms and include your own examples. This will make it more human and less detectable.

- Use humanizing tools. There are special tools that can make AI-generated text more human-like. For example, Undetectable AI can make your text less predictable for detectors.

- Add unique content. Add your own research, thoughts, analysis and examples. This will not only make your work better but also less like standard AI-generated text.

- Don’t be too formal and uniform. AI tends to generate text with formal style and uniform structure. Add conversational phrases, rhetorical questions and vary sentence lengths to make it more natural.

- Run it through a plagiarism tool. Although ChatGPT generates unique text, it’s always good to check your work for plagiarism to confirm it is original and avoid confusion.

Are there strict, clear rules for making your essay not AI detectable that apply in all cases?

No. The most effective way to keep your text from being flagged as AI generated is to check each part of it using AI detection systems before you hand it to your teacher. You never know what level of AI may be detected in your paper, so there are no universal recommendations on how to avoid an AI detector. Try to use AI tools as a source of inspiration instead of the primary generator of your text, paraphrase all the parts ChatGPT gives you, and thoroughly check your paper using plagiarism and AI detection systems before submitting the work. Following these tips will help you demonstrate the originality of your writing and receive the highest score for your efforts.

FAQ:

Can Tools Detect if a Text Was Written by ChatGPT?

Yes, there are tools that can tell if a text was made by AI.

What Happens if I Use AI Texts Without Adjusting?

In an academic environment, this can lead to plagiarism or dishonesty charges and ruin your reputation and grades.

How Can I Check if My Text Is Detected as AI?

Use AI detection tools like Copyleaks or AI Detector from CustomWritings.com to check your text.

Is It Okay to Use ChatGPT for Academic Purposes?

ChatGPT is okay as a tool to generate ideas or organize your thoughts. However, don’t rely on it entirely without adding your own input.